Introduction

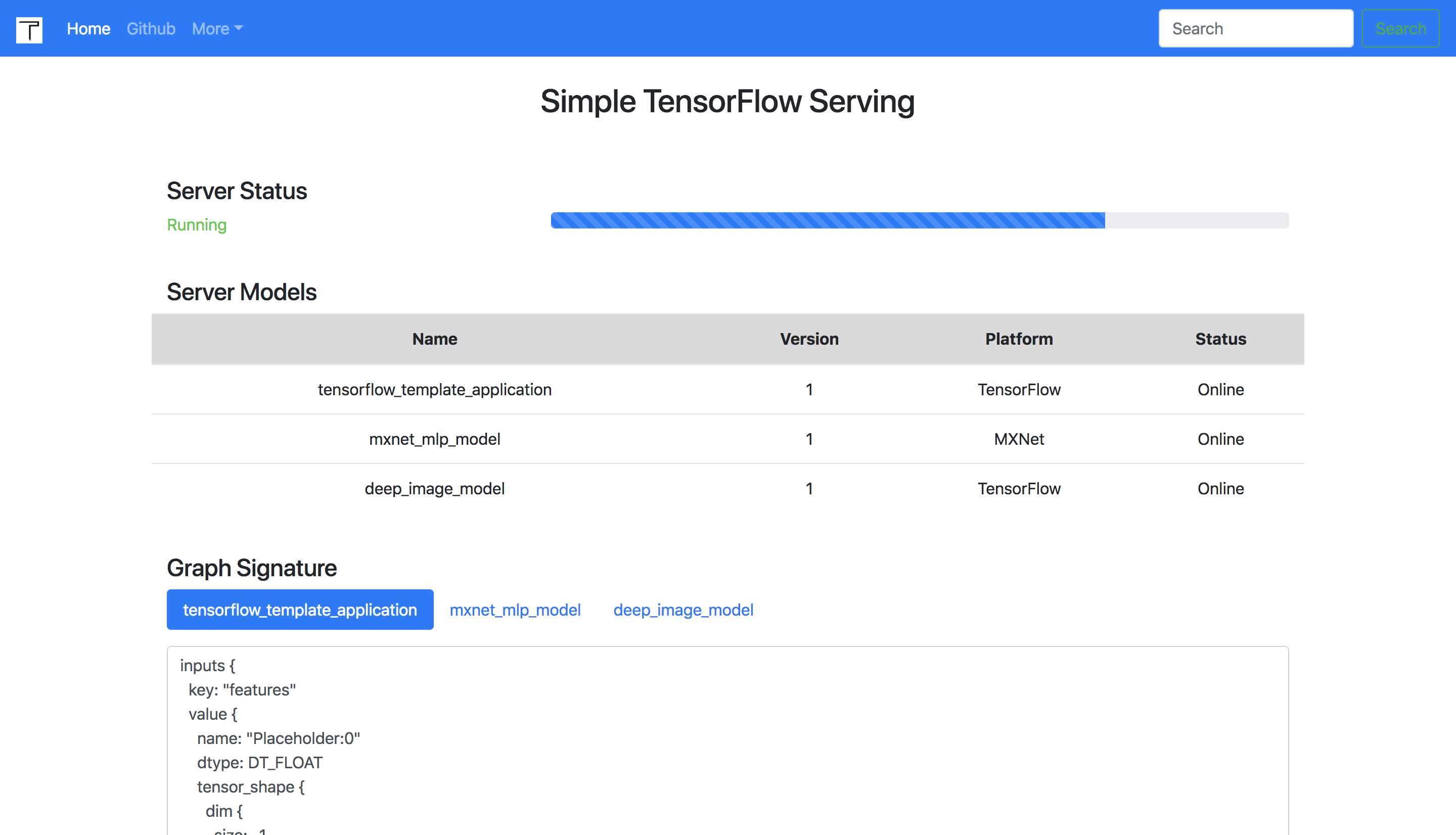

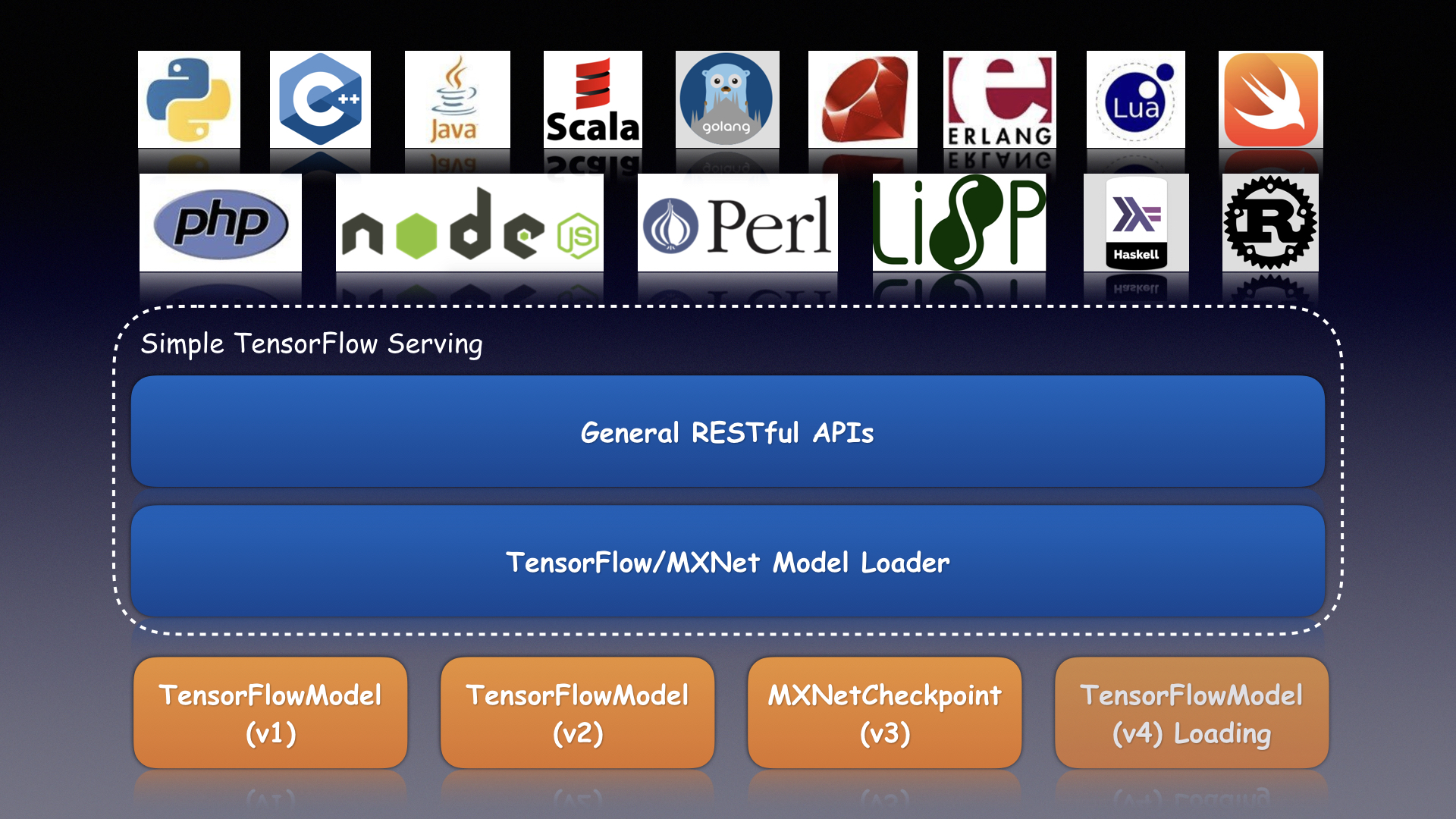

Simple TensorFlow Serving is a generic, easy-to-use serving service for machine learning models.

- [x] Support distributed TensorFlow models

- [x] Support general RESTful/HTTP APIs

- [x] Support GPU-accelerated inference

- [x] Support `curl` and other command-line tools

- [x] Support clients in any programming language

- [x] Support generating client code from models without manual coding

- [x] Support inference with raw image files for image models

- [x] Support statistical metrics for verbose requests

- [x] Support serving multiple models at the same time

- [x] Support bringing model versions online and offline dynamically

- [x] Support loading new custom ops for TensorFlow models

- [x] Support secure authentication with configurable basic auth

- [x] Support models from TensorFlow/MXNet/PyTorch/Caffe2/CNTK/ONNX/H2O/Scikit-learn/XGBoost/PMML
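As a rough illustration of the HTTP API, any-language client, and basic-auth items above, the Python sketch below builds (but does not send) an inference request using only the standard library. The endpoint `http://127.0.0.1:8500`, the payload keys `model_name`, `model_version`, and `data`, and the helper name `build_predict_request` are assumptions chosen for illustration, not the project's confirmed API; check the server's own documentation for the exact request schema.

```python
import base64
import json
import urllib.request

# Assumed endpoint for a locally running server; adjust to your deployment.
ENDPOINT = "http://127.0.0.1:8500"


def build_predict_request(data, model_name="default", model_version=None,
                          username=None, password="", endpoint=ENDPOINT):
    """Build (without sending) a JSON POST request for a hypothetical inference call.

    The payload layout {"model_name", "model_version", "data"} is an assumption
    for illustration only.
    """
    payload = {"model_name": model_name, "data": data}
    if model_version is not None:
        # Pin a specific model version only when the caller asks for one.
        payload["model_version"] = model_version
    body = json.dumps(payload).encode("utf-8")

    headers = {"Content-Type": "application/json"}
    if username is not None:
        # HTTP basic auth: base64-encode "user:password" into the Authorization header.
        token = base64.b64encode(f"{username}:{password}".encode("utf-8")).decode("ascii")
        headers["Authorization"] = f"Basic {token}"

    return urllib.request.Request(endpoint, data=body, headers=headers, method="POST")


if __name__ == "__main__":
    req = build_predict_request({"keys": [[1.0]], "features": [[1, 1, 1]]},
                                username="admin", password="secret")
    print(req.get_method(), req.full_url)
    # urllib.request.urlopen(req) would send it to a running server.
```

Because the request is plain JSON over HTTP, the same call can be reproduced with `curl` or a client in any other language; only the body and headers need to match what the server expects.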